There is a slight performance penalty for cache modifications when using the distributed cache backups however it guarantees that if a cluster node were to unexpectedly fail, that data consistency is maintained and no data is lost.įailover of a distributed cache involves promoting backup data to be primary storage. Modifications to the cache are not considered complete until all backups have acknowledged receipt of the modification. The backup count only affects the performance of cache modifications, such as those made by adding, changing or removing cache entries. If the cache were extremely critical, a higher backup count, such as two, could be used. (The default backup count setting is one.) If the cache data were not critical, which is to say that it could be re-loaded from disk, the backup count could be set to zero, which would allow some portion of the distributed cache data to be lost if a cluster node fails.

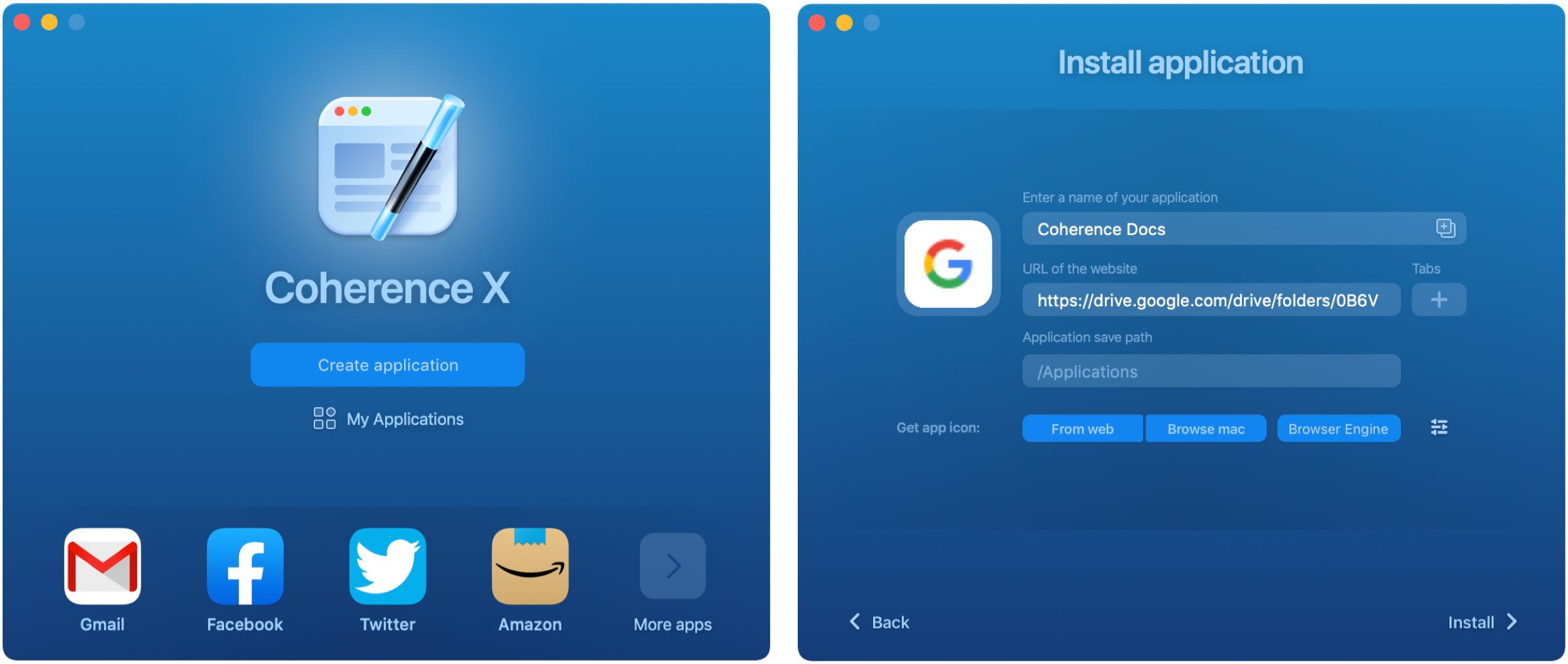

This is for failover purposes, and corresponds to a backup count of one. In the figure above, the data is being sent to a primary cluster node and a backup cluster node. All other things equals, if there are n cluster nodes, (n - 1) / n operations go over the network:įigure 11-1 provides a conceptual view of a distributed cache during get operations.įigure 11-2 Put Operations in a distributed Cache Environmentĭescription of "Figure 11-2 Put Operations in a distributed Cache Environment"

The distributed cache service allows the number of backups to be configured if the number of backups is one or higher, any cluster node can fail without the loss of data.Īccess to the distributed cache often must go over the network to another cluster node. This is called location transparency, which means that the developer does not have to code based on the topology of the cache, since the API and its behavior is the same with a local JCache, a replicated cache, or a distributed cache.įailover: All Coherence services provide failover and failback without any data loss, and that includes the distributed cache service. Location Transparency: Although the data is spread out across cluster nodes, the exact same API is used to access the data, and the same behavior is provided by each of the API methods. Load-Balanced: Since the data is spread out evenly over the servers, the responsibility for managing the data is automatically load-balanced across the cluster. Write operations are "single hop" if no backups are configured. Also, it means that read operations against data in the cache can be accomplished with a "single hop," in other words, involving at most one other server. The size of the cache and the processing power associated with the management of the cache can grow linearly with the size of the cluster. Partitioned: The data in a distributed cache is spread out over all the servers in such a way that no two servers are responsible for the same piece of cached data. There are several key points to consider about a distributed cache: Distributed caches are the most commonly used caches in Coherence.Ĭoherence defines a distributed cache as a collection of data that is distributed across any number of cluster nodes such that exactly one node in the cluster is responsible for each piece of data in the cache, and the responsibility is distributed (or, load-balanced) among the cluster nodes. 
For fault-tolerance, partitioned caches can be configured to keep each piece of data on one or more unique computers within a cluster. Data is partitioned among all storage members of the cluster. A distributed, or partitioned, cache is a clustered, fault-tolerant cache that has linear scalability.
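
The partitioning and load-balancing points, and the (n - 1) / n figure for network access, can be made concrete with a toy Java model. This is a minimal sketch under stated assumptions, not Coherence's partition-assignment algorithm, and it uses no Coherence API: the class name `PartitionedCacheSketch`, the key-to-partition rule (`hashCode() mod partitionCount`), and the round-robin assignment of partitions to nodes are all hypothetical choices made for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model of a partitioned cache; NOT Coherence's implementation.
// Hypothetical assumptions: a key lives in partition hashCode() mod partitionCount,
// and partition p is owned by node p mod nodeCount (round-robin).
final class PartitionedCacheSketch {
    private final int nodeCount;
    private final int partitionCount;

    PartitionedCacheSketch(int nodeCount, int partitionCount) {
        if (nodeCount <= 0 || partitionCount <= 0) {
            throw new IllegalArgumentException("counts must be positive");
        }
        this.nodeCount = nodeCount;
        this.partitionCount = partitionCount;
    }

    /** Partition holding the key; floorMod keeps negative hash codes in range. */
    int partitionOf(Object key) {
        return Math.floorMod(key.hashCode(), partitionCount);
    }

    /** Each partition has exactly one owner, so each key has exactly one owner. */
    int ownerOf(Object key) {
        return partitionOf(key) % nodeCount;
    }

    /** Fraction of the given keys whose owner is a different node than the caller. */
    double remoteFraction(int callingNode, List<?> keys) {
        long remote = keys.stream().filter(k -> ownerOf(k) != callingNode).count();
        return (double) remote / keys.size();
    }

    public static void main(String[] args) {
        int n = 4;
        PartitionedCacheSketch cache = new PartitionedCacheSketch(n, 12);
        List<Integer> keys = new ArrayList<>();
        for (int k = 0; k < 12_000; k++) {
            keys.add(k);
        }
        // Every access is either local or a single hop to the one owning node.
        System.out.println("remote fraction from node 0: " + cache.remoteFraction(0, keys));
        System.out.println("(n - 1) / n = " + (double) (n - 1) / n);
    }
}
```

Running `main` prints `0.75` on both lines: with the keys spread evenly, node 0 owns one quarter of them and must reach another node for the other three quarters. Because the owner is computed from the key alone, a caller never needs more than one hop to find the data, which is the "single hop" property described for reads.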

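The effect of the backup count on failover can be sketched the same way. Again this is a hypothetical toy model, not Coherence internals: the class name `BackupFailoverSketch`, placing a key's backups on the next `backupCount` nodes around a ring, and treating "read from the first live replica" as promotion of backup data to primary are all assumptions for illustration. The sketch also does not re-create backups after a failure, which a real cluster would need to do to survive a second failure.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy model of backups and failover; NOT Coherence internals.
// Hypothetical assumption: a key's primary is node (key mod n) and its backups
// are the next backupCount nodes around the ring, so all copies are on distinct nodes.
final class BackupFailoverSketch {
    private final int nodeCount;
    private final int backupCount;
    // storage.get(node) holds every copy (primary or backup) kept by that node.
    private final List<Map<Integer, String>> storage = new ArrayList<>();
    private final boolean[] alive;

    BackupFailoverSketch(int nodeCount, int backupCount) {
        if (nodeCount <= 0 || backupCount < 0 || backupCount >= nodeCount) {
            throw new IllegalArgumentException("need 0 <= backupCount < nodeCount");
        }
        this.nodeCount = nodeCount;
        this.backupCount = backupCount;
        this.alive = new boolean[nodeCount];
        for (int i = 0; i < nodeCount; i++) {
            storage.add(new HashMap<>());
            alive[i] = true;
        }
    }

    /** Nodes holding a copy of the key: the primary first, then the backups. */
    List<Integer> replicasOf(int key) {
        List<Integer> nodes = new ArrayList<>();
        int primary = Math.floorMod(key, nodeCount);
        for (int i = 0; i <= backupCount; i++) {
            nodes.add((primary + i) % nodeCount);
        }
        return nodes;
    }

    /** Returns only after every live replica holds the value (a synchronous "ack"). */
    void put(int key, String value) {
        for (int node : replicasOf(key)) {
            if (alive[node]) {
                storage.get(node).put(key, value);
            }
        }
    }

    /** Reads from the first live replica; a surviving backup acts as the promoted primary. */
    String get(int key) {
        for (int node : replicasOf(key)) {
            if (alive[node]) {
                return storage.get(node).get(key);
            }
        }
        return null; // every copy was on failed nodes, so the entry is lost
    }

    /** Simulates an unexpected crash: the node stops serving and its memory is gone. */
    void fail(int node) {
        alive[node] = false;
        storage.get(node).clear();
    }

    public static void main(String[] args) {
        for (int backups = 0; backups <= 1; backups++) {
            BackupFailoverSketch cache = new BackupFailoverSketch(3, backups);
            for (int k = 0; k < 9; k++) {
                cache.put(k, "v" + k);
            }
            cache.fail(1);
            int lost = 0;
            for (int k = 0; k < 9; k++) {
                if (cache.get(k) == null) {
                    lost++;
                }
            }
            System.out.println("backupCount=" + backups + ": lost " + lost + " of 9 entries");
        }
    }
}
```

With three nodes and node 1 failing, `main` prints `backupCount=0: lost 3 of 9 entries` followed by `backupCount=1: lost 0 of 9 entries`. The extra write per backup in `put` is the model's stand-in for the slight performance penalty on modifications; reads are unaffected by the backup count.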